| HOME | CONSULTING | PUBLICATIONS | PROJECTS | CV (PDF) |

|

Spatial OSC A Spatialized Sound Server and Protocol for MAX/Msp Using Open Sound Control |

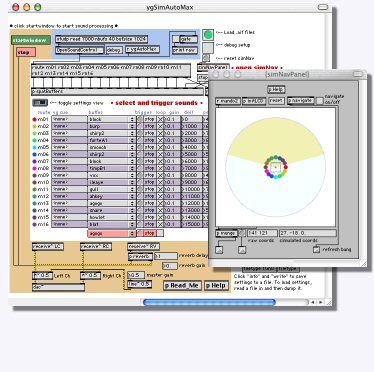

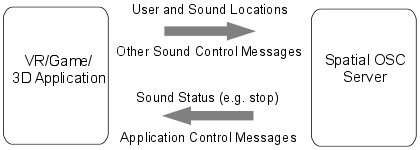

Paul Hertz and I collaborated to create a MAX/Msp sound server for use with the Ygdrasil virtual reality software. I replaced the existing protocol between Ygdrasil and our Linux sound server with one based on Open Sound Control (OSC). The resulting architecture greatly increases the audio fidelity of applications and the ability of virtual world developers to control custom parameters within MAX/Msp.

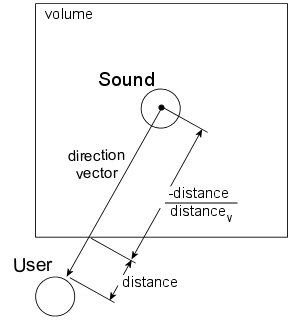

| Protocol Open Sound Control is a popular protocol for communication between sound devices. It encapsulates a time stamp for each message in order that the receiver can execute the messages in sequence at the proper time. The OSC protocol is used to instantiate sounds on the sound server and adjust their properties in real-time. A number of properties including play, stop, loop, fall off distance and spatialization (e.g. linear, squared law) are set along with real-time updates of sound position within the world. |

|

| The Ygdrasil sound nodes were extended to allow general messages to be send to the sound server. This allows users to create custom sound functionalities using the MAX/Msp software and control them from within the VR application. Messages updating the status of sounds (e.g. playing, stopped) are returned from the sound server. Messages can also be delivered via the protocol to control parameters of the Ygdrasil sounds or any other object within Ygdrasil. | |

|

|

RELATED LINKS

Paul Hertz' website.

The Ygdrasil website.

Bergen client C++ abstraction library to construct and manage sound objects.

The full messaging protocol listed below in PDF format.

MORE DETAILS

Messaging Protocol

The Open Sound Control protocol uses a concept of messages and arguments.

The following table details the full messaging protocol employed between clients and the MAX/Msp sound server:

| send message | arguments | return message | arguments | comments |

| server | "ping" | client | "pong" | send ping <string> to initialize client to server communication |

| server | "path" <string> | a colon separated search path for sound related files | ||

| server | "gain" <float> | set the overall server gain | ||

| server | "reset" | clear the voice list and prepare to initialize communication | ||

| server | "kill" | intstruct the server program to terminate | ||

| server | "listener" | client | "handle" <int> | create a listener and return a positive handle number (optional) |

listener |

<int> "position" <float> <float> <float> | set listener position relative to origin in OpenGL coordinates | ||

listener |

<int> "kill" | kill the listener with the given handle number | ||

| server | "sample" <string> [<int>] | client | "handle" <int> | create a sample file object and return handle [listener handle] |

object |

<int> "loop" <int> | set the looping status of the sample | ||

object |

<int> "play" | play the buffer from the current position | ||

object |

<int> "pause" | stop the buffer at the current position | ||

object |

<int> "stop" | stop and reset the buffer position | ||

object |

<int> "amplitude" | set the sound amplitude [0:1] | ||

object |

<int> "attenuation" <int> | set distance attenuation model* | ||

object |

<int> "referencedistance" <float> | distance at which sound has full amplitude (default 0.0) | ||

object |

<int> "falloffdistance" <float> | distance at which sound has zero amplitude | ||

object |

<int> "fallofffactor" <float> | divisor for factored attenuation models | ||

object |

<int> "mingain" <float> | minimum calculated amplitude (default 0.0) | ||

object |

<int> "maxgain" <float> | maximum calculated amplitude (default 1.0) | ||

object |

<int> "position" <float> <float> <float> | set sound position relative to listener in OpenGL coordinates | ||

object |

<int> "distance" <float> | set sound distance relative to listerner (optional)† | ||

object |

<int> "kill" | kill the sound object with the given handle number | ||

| client | "stop" <int> | inform the client the sample has ended (not looped) | ||

| server | "tone" [<int>] | client | "handle" <int> | create a tone object and return handle [listener handle] |

object |

"frequency" | set tone frequency | ||

| (also receives all messages between "play" and "kill" above) | ||||

| server | "whitenoise" [<int>] | client | "handle" <int> | create a white noise object and return handle [listener handle] |

| (also receives all messages between "play" and "kill" above) | ||||

| server | "recordfile" <string> [<int>] | client | "handle" <int> | create record-to-file object and return handle [listener handle]‡ |

object |

<int> "record" | begin recording line-in input to buffer | ||

| (also receives all messages between "pause" and "kill" above) | ||||

| server | "amplitude" [<int>] | client | "handle" <int> | create an amplitude object to monitor line-in [listener handle]‡ |

object |

<int> getamplitude" | object | <int> "amplitude" <float> | request the current amplitude of the line-in |

| (also receives all messages between "play" and "kill" above) | ||||

| server | "ratsource" [<int>] | client | "handle" <int> | create RAT source object and return handle [listener handle]± |

object |

<int> "source" <string> | set the source SSRC identifier | ||

object |

<int> "enable" <int> | set the 3D spatialization status of the source (o:off,1:on) | ||

| (also receives all messages between "play" and "kill" above) |

notes:

*attenuation models are 0:none,1:linear falloff, 2:inverse square law, 3:linear falloff by factor, 4:inverse square law by factor, 5:inverse square law clamped beyond falloff distance

†distances in the range [-1:0] reflect attenuated directionalization at full amplitude

‡recording amplitude is spatialy modulated by the attenuation model and relative distance to the listener

±this functionality is only supported by Bergen Server and not by the MAX/Msp server (see Space RAT)